Overview

This article compares the relative performance of the Apache and Nginx web servers.

Please note that the following results should be used only to gauge relative (not absolute) performance, because the tests were run locally on the server under typical conditions.

Apache and Nginx

- Apache is one of the oldest web servers and is still widely used today. However, it scales by spawning additional processes to handle more connections, a mechanism that can be relatively inefficient, particularly in memory usage.

- Nginx is a newer web server created to address some of Apache's shortcomings, particularly its performance under many concurrent connections.

Both Apache and Nginx are available pre-configured on DreamHost's Managed VPS and Dedicated Hosting platforms.

Testing method

These web servers were tested with ApacheBench (ab), an HTTP server benchmarking tool.

- Each test was run locally on a VPS to remove variable network conditions from the results.

- In each test, 25,000 requests were made for a 5 KB PNG file.

- Before each test, the web server was restarted to clear any caching that might interfere with the results.

- Each test was run at several concurrency levels to gauge performance under different amounts of load.

The testing command

The tests used the following command format, where the -n flag sets the total number of requests and the -c flag sets the concurrency level (the number of requests made simultaneously).

[server]$ ab -n 25000 -c 50 https://www.example.com/dreamhost_logo.png
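To repeat this test at several concurrency levels, restarting the server before each run as described in the testing method, the command can be wrapped in a small loop. The following is a minimal Bash sketch, not the script used to produce these results: the article does not list its exact concurrency levels, so the values in LEVELS are hypothetical, and RESTART_CMD assumes a systemd service named nginx (use apache2 or httpd for Apache, depending on your distribution).

```shell
# Minimal sketch (meant to be sourced): repeat the ApacheBench test at several
# concurrency levels. LEVELS and RESTART_CMD are assumptions, not the
# article's exact test plan.

URL="https://www.example.com/dreamhost_logo.png"
REQUESTS=25000
LEVELS="10 50 100 200"                                      # hypothetical levels
RESTART_CMD="${RESTART_CMD:-sudo systemctl restart nginx}"  # assumed service name

run_sweep() {
  local c
  for c in $LEVELS; do
    # Restart the web server so each run starts without leftover caching.
    $RESTART_CMD
    echo "=== concurrency $c ==="
    # -n: total number of requests; -c: number of simultaneous requests.
    ab -n "$REQUESTS" -c "$c" "$URL"
  done
}
```

For example, `source sweep.sh && run_sweep > results.txt` collects the output of every run in one file for later comparison.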

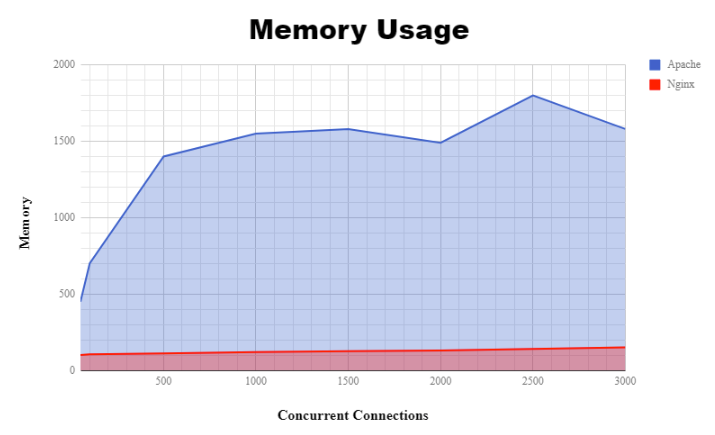

Memory usage comparison

Nginx is the clear leader in this test: its memory usage stays low as concurrency increases, while Apache's climbs.

The difference comes from how Apache scales: to handle additional incoming requests, it spawns new processes.

- As more connections arrive, Apache spawns more processes to handle them, so its memory usage grows quickly.

- By comparison, Nginx's memory usage stays nearly constant.
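One way to observe this difference yourself is to total the resident memory of each server's processes while a test is running. The Bash sketch below is an illustration, not how the published results were measured: it assumes a Linux system with the procps version of ps, and the process names (apache2 on Debian/Ubuntu, httpd on RHEL-based systems, nginx) depend on your distribution. The function name server_mem is hypothetical.

```shell
# Minimal sketch: count a server's processes and sum their resident memory (RSS).
# Assumes Linux procps `ps`. Shared pages are counted once per process, so the
# total is an upper-bound approximation rather than exact memory usage.
server_mem() {
  local name="$1"
  # -C selects processes by command name; rss= prints RSS in KB with no header.
  ps -C "$name" -o rss= 2>/dev/null |
    awk -v name="$name" '{ sum += $1 }
      END { printf "%s: %d processes, %d KB RSS\n", name, NR, sum }'
}
```

Running `server_mem apache2` and `server_mem nginx` in a second terminal during an `ab` run should show Apache's process count rising with concurrency, while Nginx keeps the fixed number of worker processes set by its `worker_processes` directive.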

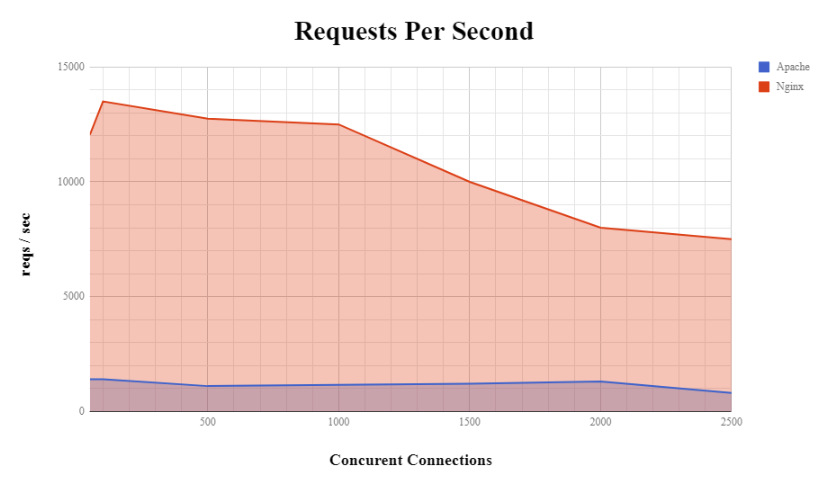

Requests per second comparison

This test measures how quickly the server can receive and serve requests at different levels of concurrency. The more requests a server can handle per second, the better it can cope with large amounts of traffic. Once again, Nginx serves clearly more requests per second than Apache.
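ApacheBench reports this figure in its summary on a line beginning "Requests per second:", calculated as the number of completed requests divided by the total time taken; for example, 25,000 requests completed in 5 seconds would be reported as 5,000 requests per second. A minimal Bash sketch for extracting that value from ab output, so runs at different concurrency levels can be compared side by side, might look like this (the function name rps_from_ab is hypothetical):

```shell
# Hypothetical helper: extract the mean requests-per-second value from ab output.
# ab's summary line looks like: "Requests per second:    1234.56 [#/sec] (mean)"
rps_from_ab() {
  # Read ab output from stdin and print the 4th whitespace-separated field
  # of the matching line (the numeric value).
  awk '/^Requests per second:/ { print $4 }'
}
```

For example: `ab -n 25000 -c 50 https://www.example.com/dreamhost_logo.png | rps_from_ab`.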

Which server should I use?

Your choice of server depends on your website's requirements.

- Apache offers more functionality out of the box and is probably the most compatible option for the majority of websites.

- Nginx may be a good choice if your website receives many concurrent requests, because it uses less memory and delivers better overall performance under load.